SysInternals Updater

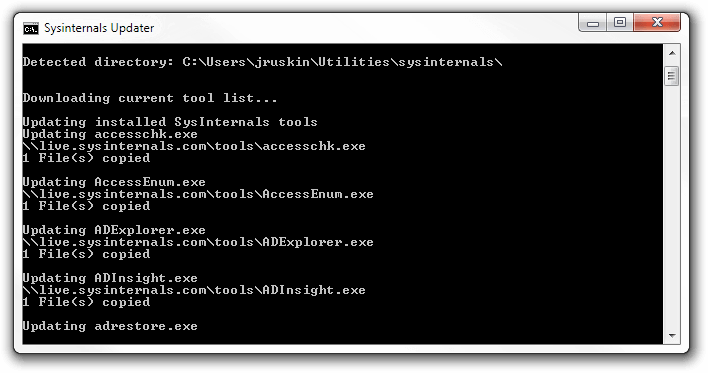

I was just running a delightful batch script written by Jason Faulkner to update my SysInternals tools directory.

It was taking forever: at least 2 seconds per tool (regardless of state), plus a huge chunk of time to download the current tool list. It seems to download every tool, whether or not the local copy is already up to date.

I thought it might be far quicker in PowerShell with some more up-to-date methods, so I sketched this out. It's far less robust (it won't attempt to kill processes that are in use), but I don't actually want that functionality.

    function Update-SysInternalsTools {
        [CmdletBinding()]
        param(
            # Folder that holds the local copies of the SysInternals tools
            [Parameter(Mandatory)]
            [string]$localDirectory
        )

        begin {
            # List both sides once, up front
            $localTools  = Get-ChildItem $localDirectory -Filter *.exe
            $onlineTools = Get-ChildItem '\\live.sysinternals.com\tools' -Filter *.exe
        }

        process {
            foreach ($tool in $localTools) {
                # Find the online file with the same name (may be $null)
                $online = $onlineTools | Where-Object { $_.Name -eq $tool.Name }

                # Only update when an online copy exists and is newer
                if ($online -and $tool.LastWriteTime -lt $online.LastWriteTime) {
                    Write-Verbose "[$($tool.Name)] requires updating..."
                    try {
                        Invoke-WebRequest -Uri $online.FullName -OutFile $tool.FullName -ErrorAction Stop
                    }
                    catch {
                        Write-Warning "$($tool.Name) failed to update."
                    }
                }
            }
        }
    }

    # Run immediately unless the script was dot-sourced (prompts for the directory)
    if ($MyInvocation.InvocationName -ne '.') { Update-SysInternalsTools }

I'll probably update the script shortly to add kill-process-and-restart as an option, along with a "download everything" argument (it currently only updates tools that are already present). Even so, it seems to get through the listing far faster.
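
For the "download everything" idea, the missing piece is a list of tools that exist online but not locally. Here's a rough sketch of how that could look. `Get-MissingTool` and its parameter names are made up for illustration and aren't part of the script above; the default source is just the same share the script already lists.

```powershell
# Hypothetical helper: returns the online .exe files that have no local copy.
function Get-MissingTool {
    [CmdletBinding()]
    param(
        # Folder that holds the local tools
        [Parameter(Mandatory)]
        [string]$LocalDirectory,

        # Where to look for the full tool set (assumed to be the live share)
        [string]$SourceDirectory = '\\live.sysinternals.com\tools'
    )

    # Names already present locally; @() keeps this an array even for 0 or 1 files
    $localNames = @(Get-ChildItem -Path $LocalDirectory -Filter *.exe |
        ForEach-Object { $_.Name })

    # -notcontains is case-insensitive, which suits Windows file names
    Get-ChildItem -Path $SourceDirectory -Filter *.exe |
        Where-Object { $localNames -notcontains $_.Name }
}
```

Something like `Get-MissingTool -LocalDirectory 'C:\Tools' | Copy-Item -Destination 'C:\Tools'` would then pull down whatever is missing (`C:\Tools` being a placeholder path).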

It's also currently just comparing LastWriteTime. It could grab the VersionInfo/FileVersion with Get-ItemProperty instead, but I thought that might add a large (and fairly unnecessary) delay. I'll have to test it.
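
A version-based check might look something like the sketch below. It reads the version through .NET's `System.Diagnostics.FileVersionInfo` rather than Get-ItemProperty, and builds a `[version]` from the numeric parts so the comparison isn't thrown off by oddly formatted version strings. `Get-ToolFileVersion` and `Test-ToolOutdated` are hypothetical names, and I haven't measured how much slower this is against the live share.

```powershell
# Hypothetical helper: returns the file version of a tool as a [version] object.
function Get-ToolFileVersion {
    param(
        [Parameter(Mandatory)]
        [string]$Path
    )

    # .NET resolves relative paths against its own working directory,
    # so hand it a fully resolved path
    $fullPath = (Resolve-Path -LiteralPath $Path).ProviderPath
    $info = [System.Diagnostics.FileVersionInfo]::GetVersionInfo($fullPath)

    # Build the version from its numeric parts instead of parsing the string
    New-Object System.Version -ArgumentList $info.FileMajorPart, $info.FileMinorPart, $info.FileBuildPart, $info.FilePrivatePart
}

# Hypothetical helper: $true when the online copy has a higher file version.
function Test-ToolOutdated {
    param(
        [Parameter(Mandatory)]
        [string]$LocalPath,

        [Parameter(Mandatory)]
        [string]$OnlinePath
    )

    (Get-ToolFileVersion -Path $LocalPath) -lt (Get-ToolFileVersion -Path $OnlinePath)
}
```

Usage would be along the lines of `Test-ToolOutdated -LocalPath 'C:\Tools\procexp.exe' -OnlinePath '\\live.sysinternals.com\tools\procexp.exe'`, with `C:\Tools` standing in for wherever the tools live.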